What can teachers actually do?

12 January 2018

By Guest

Ben White of the Ashford Teaching Alliance and CEBE (Coalition for Evidence-based Education) reveals the flaws in complex data management systems when applied to classroom teaching.

What can teachers actually do? Well, they can:

- explain things

- ask questions

- direct behaviour (in lessons and, to some extent, outside them)

Or ‘just teach’ (as @Teacherhead writes). The rest is arguably noise. More on this later.

Data management processes and systems take significantly less teacher time than planning and marking, but they account for a high proportion of the workload teachers report. In fact, workload and the hours which teachers report working are less closely related than you might imagine. Recently published work by Sam Sims for the DfE showed little correlation between reported working hours and perceptions of how reasonable the workload was.

The problem is that it’s easy for those of us in leadership roles to introduce demands which (on paper or digitally, at least) link to our core purpose: driving up standards, progress, etc. But teachers see them as a hindrance.

This is because we can fail to see the complexity behind the summaries and reports which, to us, are a mere few keystrokes away. They give the impression that we can know things which are probably not knowable.

Simple figures hide complex reality

Complex data management systems can lead to a simplified version of management which prioritises neatly shifting numerical values over the much tougher (and less predictable) realities which lie behind these numbers.

The precision and accuracy promised by some of the data management processes included in our recent research projects seem to run against the uncertainty and unpredictability inherent to classroom teaching.

There is a risk in this context that highly rationalised data management systems could actually have a limited or even negative impact when they come into contact with the complex realities of classroom teaching.

A key message from our data workload interviews* was the imperative for leadership teams to have a firm grasp of the reliability and validity of the data which they are acting upon.

We can start by engaging fully with the following three claims:

- Individual grade predictions are not particularly accurate. The more precise we try to make them (the finer the grades we predict), the less accurate they will be.

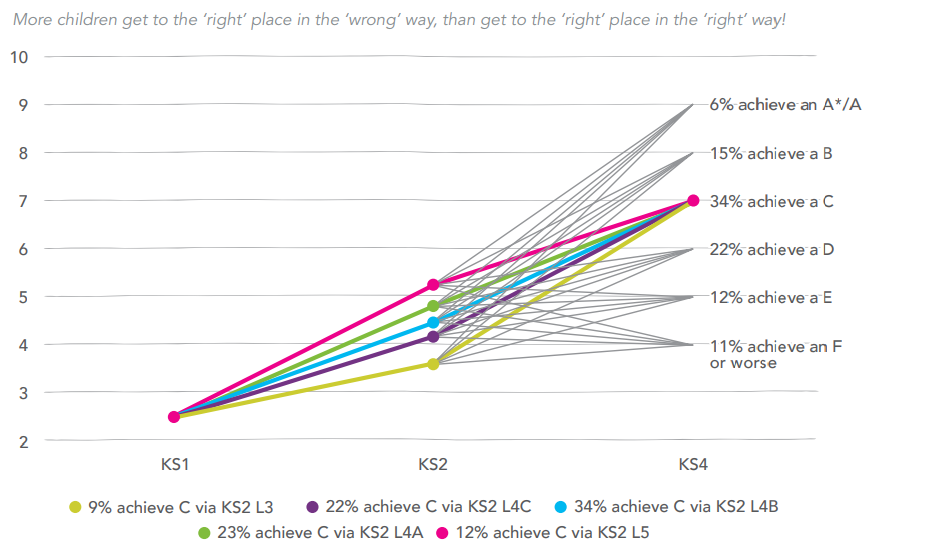

- Flight paths are not accurate for individuals. The more precise they are, the more wrong they are likely to be. There is no ‘should’ about it. Pupils with lower KS2 grades, for example, will gain lower GCSEs on average, but numerous individuals with low KS2 grades will outperform others with high KS2 grades. This graph helps illustrate the reality behind the ‘average progress’ line (full report).

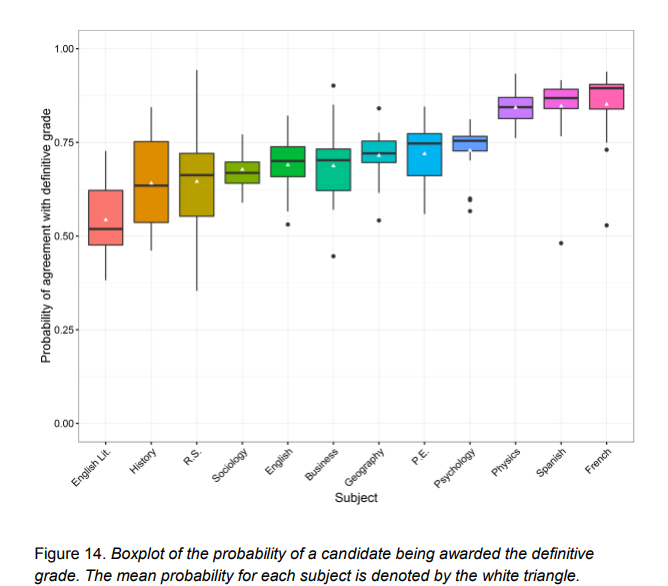

- Annual exam results are naturally quite variable – and (in some cases) remarkably unreliable. This Ofqual report, for example, shows just how unreliable exam grading can be (whole report).
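For readers who like to test claims for themselves, the first two points can be explored with a small simulation. What follows is only a rough sketch on made-up data, not an analysis of real pupils: the 0.7 correlation between prior attainment and outcome, the equal-width grade bands and the function names (`simulate`, `to_grade`, `hit_rate`, `overlap_rate`) are all assumptions chosen for illustration.

```python
import random


def simulate(n_pupils=10_000, correlation=0.7, seed=0):
    """Return (prior, outcome) pairs of synthetic standardised scores.

    The correlation is an assumed value for illustration, not a measured one.
    """
    rng = random.Random(seed)
    noise_sd = (1 - correlation ** 2) ** 0.5
    pupils = []
    for _ in range(n_pupils):
        prior = rng.gauss(0, 1)
        outcome = correlation * prior + rng.gauss(0, noise_sd)
        pupils.append((prior, outcome))
    return pupils


def to_grade(score, n_bands):
    """Cut a standardised score into n_bands equal-width bands across [-3, 3]."""
    clipped = max(-3.0, min(3.0 - 1e-9, score))
    return int((clipped + 3.0) / 6.0 * n_bands)


def hit_rate(pupils, n_bands, correlation):
    """Share of pupils whose predicted grade band equals their actual band.

    The prediction for each pupil is the 'flight path' value: correlation * prior.
    """
    hits = sum(
        to_grade(correlation * prior, n_bands) == to_grade(outcome, n_bands)
        for prior, outcome in pupils
    )
    return hits / len(pupils)


def overlap_rate(pupils):
    """Share of pupils with below-average prior scores (prior < 0)
    whose outcome is nonetheless above the model's average (outcome > 0)."""
    low_prior = [outcome for prior, outcome in pupils if prior < 0]
    return sum(outcome > 0 for outcome in low_prior) / len(low_prior)


if __name__ == "__main__":
    pupils = simulate()
    print("Hit rate with 3 grade bands:", round(hit_rate(pupils, 3, 0.7), 2))
    print("Hit rate with 9 grade bands:", round(hit_rate(pupils, 9, 0.7), 2))
    print("Low-prior pupils finishing above average:", round(overlap_rate(pupils), 2))
```

Under these assumptions, the share of correct predictions falls as the grade bands get finer, and a sizeable minority of pupils with below-average prior scores still finish above average. The exact figures depend entirely on the assumed correlation and banding, so treat them as an illustration of the pattern rather than an estimate for any real cohort.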

Failing to understand the above makes it likely that we’ll pick ‘the wrong kids’ and, potentially, conduct an ill-considered intervention. Often this is premised on the idea that they should be progressing more in line with an aggregated average of pupils who share a couple of prior assessment scores with them. Or we could react in knee-jerk fashion to grade variations which might not mean what we think they mean.

Predicting the future?

In reality, it’s actually very hard to know what sort of teaching and learning is happening. It’s even harder to predict the future based on the little we can know.

As Dr Rebecca Allen put it in this year’s Caroline Benn Memorial Lecture, despite the impression technology and tracking systems may give, ‘Auditing teaching and learning isn’t really possible… a headteacher cannot know what is going on in a classroom, unless they are there.’

Teachers live daily with the uncertainty and unpredictability of actual teaching. It’s part of what makes the job so rewarding (and frustrating). Subjecting staff to a system which overlooks this uncertainty does their professionalism a disservice and probably has a negative impact on their experience of being a teacher.

‘If you over-complicate it, or you try and drill too deep into it, you actually draw faulty conclusions. Worse, in time you lose half the staff, who just switch off after a while. I think there is a risk with data.’ (Headteacher, secondary school)

To repeat, teachers can: explain, ask questions, direct pupil behaviour. Terms like intervention, target, focus, boundary pupils, etc. can easily become mere lip service to accountability measures and, more likely, synonyms for worry, unless they link directly to the above actions: explaining something differently (or again), asking different questions, and directing helpful behaviours (encouraging pupils to act in ways which will help them learn).

It probably takes a subject specialist to carry out the first two actions (explaining, asking questions). Senior leaders could help facilitate this support but, as non-specialists, we are not best placed to help directly. We could, however, focus on ensuring that all pupils behave (in school and in their own study time) in ways which will help them learn, and that teaching staff are freed up to improve the quality of their explanation and questioning.

Arguably, the rest is just noise.

*The interviews were conducted with over 30 teachers between January and March this year. The teachers and senior leaders were working in a range of primary and secondary school contexts in the south-east of England. The interviews formed part of a research project exploring teacher perceptions of data-related workload, due to be published by DfE and NCTL in the spring.

Read more about reducing teacher workload on the SSAT blog: Reducing teacher workload – do’s and don’ts.